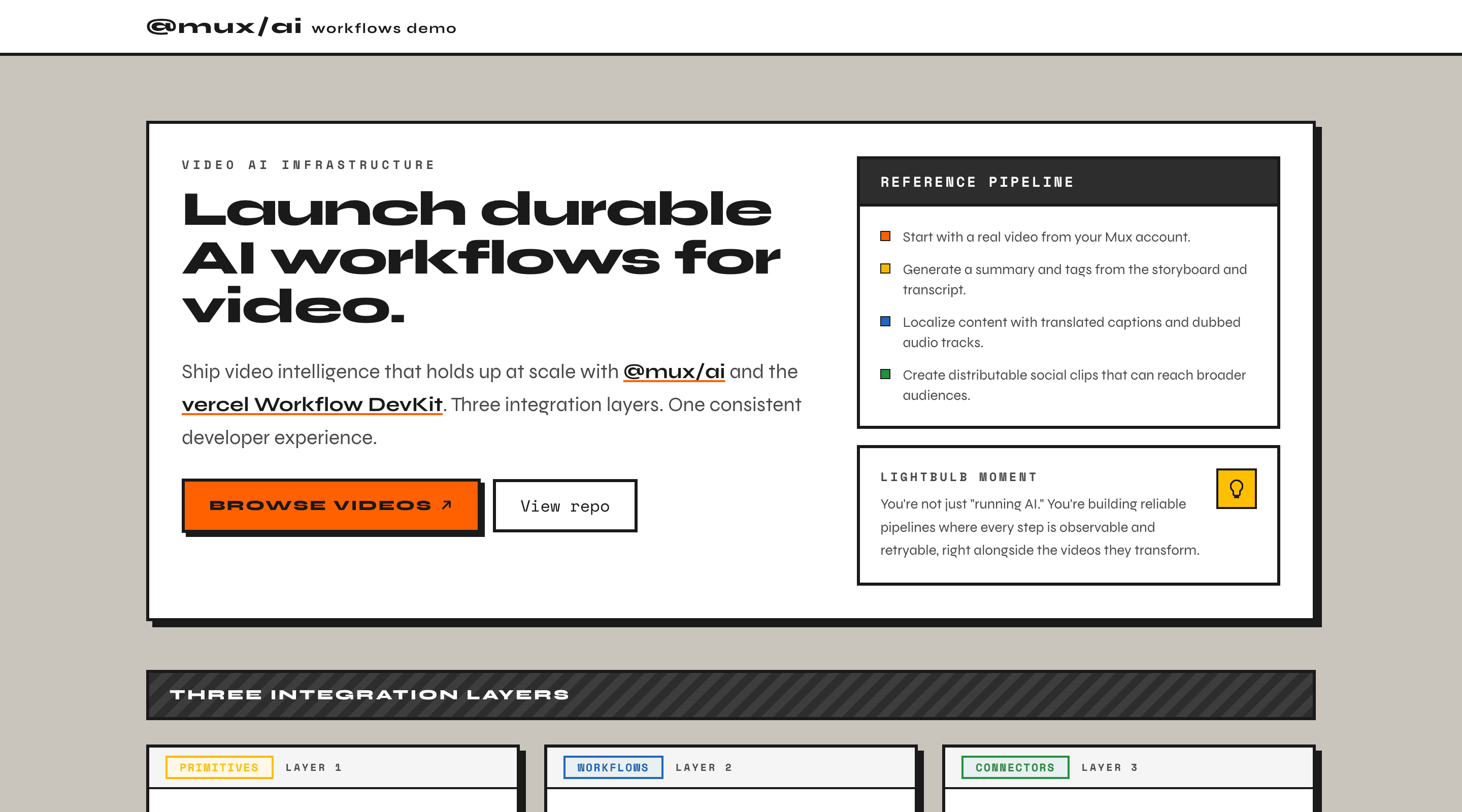

@mux/ai + Vercel Workflows Starter

A Next.js starter template demonstrating how to build durable video AI pipelines with @mux/ai and the Vercel Workflow DevKit.

🚀 Deploy to Vercel

Three Integration Layers

| Layer | Pattern | Example |
|---|---|---|
| 1. Primitives | Call functions directly | getSummaryAndTags(): instant results |
| 2. Workflows | Run durably via Vercel Workflows | translateCaptions, translateAudio: retries, progress tracking |
| 3. Connectors | Compose with external tools | Clip creation with Remotion: multi-step pipelines |

Resumable workflows (try it)

This project showcases resumable, durable workflows out of the box:

- Start a workflow (captions, dubbing, or summary).

- Refresh the page, or navigate away and back.

- You should see the workflow still running asynchronously, with status rehydrated from browser localStorage.
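The rehydration step can be pictured with a small sketch. It assumes the client keeps a list of started runs under a single storage key; the key name, the StoredRun shape, and the helper names are hypothetical and are not taken from this project's code. The store is typed as a minimal interface so the same functions work against window.localStorage in the browser and against an in-memory stand-in elsewhere.

```typescript
// Minimal subset of the Web Storage API; window.localStorage satisfies it.
interface KeyValueStore {
  getItem(key: string): string | null;
  setItem(key: string, value: string): void;
  removeItem(key: string): void;
}

// Hypothetical shape of what the client remembers about a started run.
interface StoredRun {
  runId: string;
  kind: "captions" | "dubbing" | "summary";
  startedAt: number;
}

// Hypothetical key name, chosen for this sketch only.
const STORAGE_KEY = "workflow-runs";

// Read the remembered runs; a missing or corrupted entry yields an empty list.
function loadRuns(store: KeyValueStore): StoredRun[] {
  const raw = store.getItem(STORAGE_KEY);
  if (raw === null) return [];
  try {
    const parsed: unknown = JSON.parse(raw);
    return Array.isArray(parsed) ? (parsed as StoredRun[]) : [];
  } catch {
    return [];
  }
}

// Remember a run, replacing any earlier entry with the same runId.
function rememberRun(store: KeyValueStore, run: StoredRun): void {
  const runs = loadRuns(store).filter((r) => r.runId !== run.runId);
  runs.push(run);
  store.setItem(STORAGE_KEY, JSON.stringify(runs));
}

// Drop a run once it has finished, so it is not rehydrated again.
function forgetRun(store: KeyValueStore, runId: string): void {
  const runs = loadRuns(store).filter((r) => r.runId !== runId);
  if (runs.length === 0) {
    store.removeItem(STORAGE_KEY);
  } else {
    store.setItem(STORAGE_KEY, JSON.stringify(runs));
  }
}

// In-memory stand-in for localStorage, useful outside the browser.
function createMemoryStore(): KeyValueStore {
  const data = new Map<string, string>();
  return {
    getItem: (key) => data.get(key) ?? null,
    setItem: (key, value) => {
      data.set(key, value);
    },
    removeItem: (key) => {
      data.delete(key);
    },
  };
}
```

On page load, a component could call loadRuns(window.localStorage) and resume polling each returned runId. Only the identifiers live in the browser; the run itself keeps executing on the server.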

Quick Start

Inspect workflow runs locally:

Rate Limiting

This demo includes IP-based rate limiting to protect against excessive API costs. Limits are automatically bypassed in development mode.

| Endpoint | Limit | Window |
|---|---|---|
| translate-audio | 3 | 24h |
| translate-captions | 10 | 24h |
| render | 6 | 24h |
| summary | 10 | 24h |
| search | 50 | 1h |

See DOCS/RATE-LIMITS.md for implementation details and maintenance.
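As a rough illustration of the behavior described in this section, here is a minimal fixed-window limiter keyed by endpoint and IP. It is a sketch under stated assumptions, not the implementation documented in DOCS/RATE-LIMITS.md: the in-memory Map, the class name, and the isDevelopment constructor flag are hypothetical, and a real deployment would most likely need a shared store rather than per-process memory. Only the limits and windows come from the table above.

```typescript
interface RateLimit {
  limit: number;
  windowMs: number;
}

const HOUR_MS = 60 * 60 * 1000;

// Limits and windows copied from the table in this section.
const RATE_LIMITS: Record<string, RateLimit> = {
  "translate-audio": { limit: 3, windowMs: 24 * HOUR_MS },
  "translate-captions": { limit: 10, windowMs: 24 * HOUR_MS },
  render: { limit: 6, windowMs: 24 * HOUR_MS },
  summary: { limit: 10, windowMs: 24 * HOUR_MS },
  search: { limit: 50, windowMs: 1 * HOUR_MS },
};

interface WindowState {
  count: number;
  resetAt: number;
}

interface CheckResult {
  allowed: boolean;
  remaining: number;
}

// Hypothetical in-memory limiter; state is lost on restart and not shared across instances.
class FixedWindowLimiter {
  private windows = new Map<string, WindowState>();
  private readonly isDevelopment: boolean;

  constructor(isDevelopment: boolean) {
    this.isDevelopment = isDevelopment;
  }

  check(endpoint: string, ip: string, now: number = Date.now()): CheckResult {
    // Development mode bypasses all limits.
    if (this.isDevelopment) return { allowed: true, remaining: Infinity };

    const config = RATE_LIMITS[endpoint];
    // Sketch choice: endpoints not listed in the table are left unrestricted.
    if (config === undefined) return { allowed: true, remaining: Infinity };

    const key = `${endpoint}:${ip}`;
    let state = this.windows.get(key);
    // Start a fresh window on first use or once the previous one has expired.
    if (state === undefined || now >= state.resetAt) {
      state = { count: 0, resetAt: now + config.windowMs };
      this.windows.set(key, state);
    }

    if (state.count >= config.limit) return { allowed: false, remaining: 0 };
    state.count += 1;
    return { allowed: true, remaining: config.limit - state.count };
  }
}
```

A route handler could call check("summary", clientIp) and answer with HTTP 429 when allowed is false.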

Remotion support

Remotion is used within this example app for composing @mux/ai with video rendering.

Local Development

Production Deployment

Note: remotion:deploy bundles and deploys your Remotion site to AWS Lambda for production video rendering. It is not for development; use remotion:studio and remotion:render:local for local dev and testing.

Automated Deployment

Remotion is automatically deployed to AWS Lambda when changes to remotion/ are merged into main. See DOCS/AUTOMATED-REMOTION-DEPLOYMENTS.md for details.

Environment Variables

See AGENTS.md for the full list. At minimum you'll need:

Database setup + importing your Mux catalog

This project stores your Mux catalog metadata in Postgres and generates pgvector embeddings for semantic search.
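To make the semantic search idea concrete, the sketch below ranks chunks by cosine distance in plain TypeScript. It assumes cosine distance is the metric in use (pgvector exposes it as the <=> operator); this project may use a different operator or index. The Chunk shape and function names are hypothetical, and in the real app this ranking would run inside Postgres rather than in application code.

```typescript
// Hypothetical in-memory stand-in for a row with an embedding column.
interface Chunk {
  videoId: string;
  text: string;
  embedding: number[];
}

// Cosine distance = 1 - cosine similarity: 0 for same direction, 1 for orthogonal vectors.
function cosineDistance(a: number[], b: number[]): number {
  if (a.length !== b.length) {
    throw new Error(`dimension mismatch: ${a.length} vs ${b.length}`);
  }
  let dot = 0;
  let normA = 0;
  let normB = 0;
  a.forEach((x, i) => {
    const y = b[i] as number;
    dot += x * y;
    normA += x * x;
    normB += y * y;
  });
  // Sketch choice: treat a zero vector as maximally dissimilar instead of returning NaN.
  if (normA === 0 || normB === 0) return 1;
  return 1 - dot / (Math.sqrt(normA) * Math.sqrt(normB));
}

// Return the k chunks closest to the query embedding, nearest first.
function nearestChunks(query: number[], chunks: Chunk[], k: number): Chunk[] {
  return chunks
    .map((chunk) => ({ chunk, distance: cosineDistance(query, chunk.embedding) }))
    .sort((left, right) => left.distance - right.distance)
    .slice(0, k)
    .map((scored) => scored.chunk);
}
```

The query embedding must come from the same embedding model as the stored chunks, otherwise the distances are not comparable.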

The database schema and migrations are managed with Drizzle (see db/schema.ts and db/migrations/), and the db:* scripts use Drizzle Kit.

1) Configure your database connection

Create a .env.local file (this is what both Drizzle and the import script load):

Your Postgres must support pgvector. The first migration will run CREATE EXTENSION IF NOT EXISTS vector;.

2) Run database migrations

Apply the migrations in db/migrations/ (creates tables + indexes and enables pgvector):

3) Import Mux assets (and generate embeddings)

This fetches all ready Mux assets with playback IDs, upserts rows into videos, and writes embedding rows into video_chunks.
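The selection and upsert logic described above might look roughly like the following sketch. The status and playback_ids fields follow the shape of a Mux asset object, but the VideoRow columns, the function name, and the use of a Map in place of the videos table are assumptions made for illustration. Fetching from the Mux API and writing embedding rows to video_chunks are left out.

```typescript
// Subset of a Mux asset as used by this sketch.
interface MuxAssetLike {
  id: string;
  status: "preparing" | "ready" | "errored";
  playback_ids?: { id: string; policy: string }[];
}

// Hypothetical columns; the real schema lives in db/schema.ts.
interface VideoRow {
  muxAssetId: string;
  playbackId: string;
}

// Upsert every ready asset that has a playback ID; returns how many rows were written.
// The Map stands in for the videos table, keyed by Mux asset ID.
function upsertVideos(table: Map<string, VideoRow>, assets: MuxAssetLike[]): number {
  let written = 0;
  for (const asset of assets) {
    const firstPlayback = asset.playback_ids?.[0];
    // Skip assets that are not ready or cannot be played back.
    if (asset.status !== "ready" || firstPlayback === undefined) continue;
    // Map.set inserts a new row or overwrites the existing one, which is the upsert behavior.
    table.set(asset.id, { muxAssetId: asset.id, playbackId: firstPlayback.id });
    written += 1;
  }
  return written;
}
```

Because rows are keyed by asset ID, running the import twice updates existing rows instead of duplicating them.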

To embed subtitles from a specific captions track language, pass --language (defaults to en). This should match the language of an existing captions track on the source Mux asset — it does not translate captions:
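A sketch of how the language option could be resolved against an asset's tracks is shown below. The type and language_code fields mirror Mux track objects, while the argument parsing (space-separated form only) and both function names are assumptions rather than the import script's actual code. The point it illustrates is that a missing track yields no captions at all, since nothing is translated.

```typescript
// Subset of a Mux track as used by this sketch.
interface TrackLike {
  id: string;
  type: "video" | "audio" | "text";
  language_code?: string;
}

// Read "--language <code>" from an argument list, falling back to "en".
// Assumption: only the space-separated form is handled here.
function parseLanguageFlag(argv: string[]): string {
  const index = argv.indexOf("--language");
  const value = index >= 0 ? argv[index + 1] : undefined;
  return value !== undefined && !value.startsWith("--") ? value : "en";
}

// Find an existing text track in the requested language, or undefined if there is none.
function findCaptionsTrack(tracks: TrackLike[], language: string): TrackLike | undefined {
  return tracks.find((track) => track.type === "text" && track.language_code === language);
}
```

For an asset that only has English captions, findCaptionsTrack(tracks, "es") returns undefined, so a caller would skip subtitle embedding for that asset rather than fall back to another language.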

4) Understand the database scripts

- npm run db:generate: Generates new migration files from db/schema.ts (use this after changing the schema).
- npm run db:migrate: Applies migrations to the database defined by DATABASE_URL.
- npm run db:studio: Opens Drizzle Studio to inspect tables/rows locally (also uses DATABASE_URL).

Media Detail Page Structure

The media detail page (/media/[slug]) is organized into co-located feature folders:

Learn More

- context/application-explained.md: what the app does and why
- context/design-explained.md: visual design and UX patterns
- context/implementation-explained.md: routes, data model, and code patterns
- AGENTS.md: guidance for AI coding assistants
- DOCS/RATE-LIMITS.md: rate limiting configuration and maintenance

See Also

- pgvector: vector embeddings and similarity search for Postgres